Streaming Audio With AVAudioEngine

How to build a streaming audio player that lets you adjust the time and pitch of a song in real time as it is downloaded from the internet.

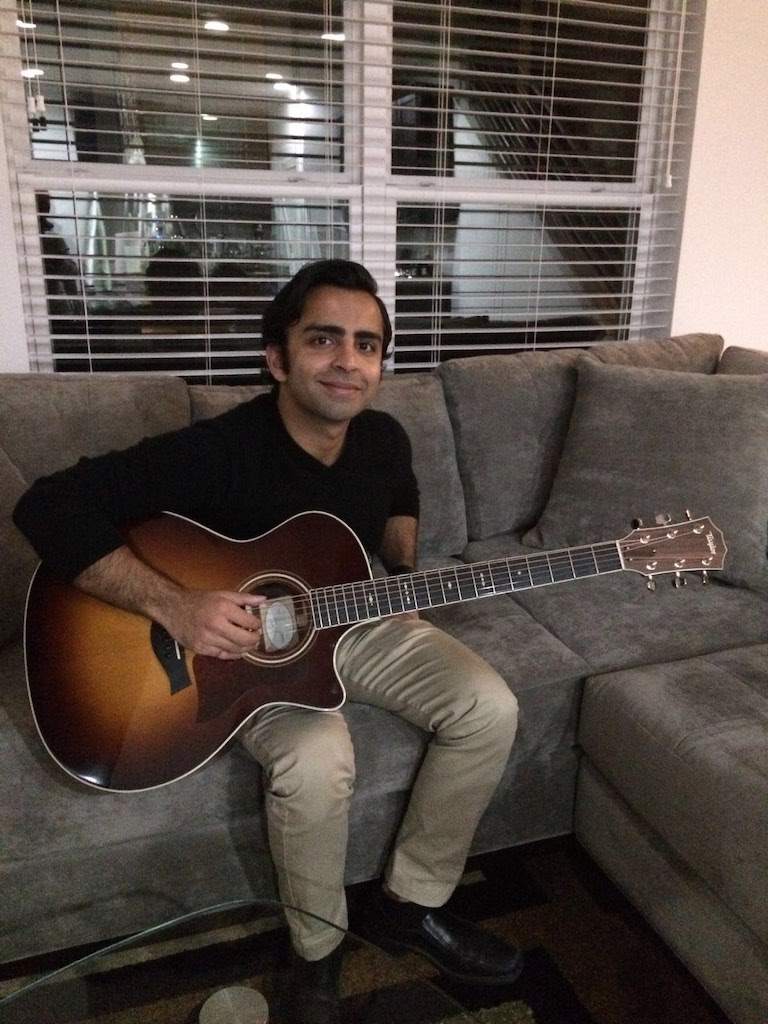

I'm an experienced software engineer, manager, and open source author currently based in Los Angeles, CA and Austin, TX. I love creating new, innovative products and building amazing teams with amazing individuals. When I'm not coding, you'll find me rock climbing, playing music, and hiking with my dog Winston. You can read more about my professional experience on my About page.
